If you’re a biotech founder, an investor running due diligence, or an executive at a pre-IPO company, you’ve probably hired outside consultants this year for IP analysis, KOL research, scientific due diligence, or market positioning. They’ve signed your NDA. They’ve received decks, internal data, mechanism notes, unfiled IP positioning, and the kind of information your general counsel guards carefully.

Here’s a question worth asking: where, exactly, does that data go when they work on it?

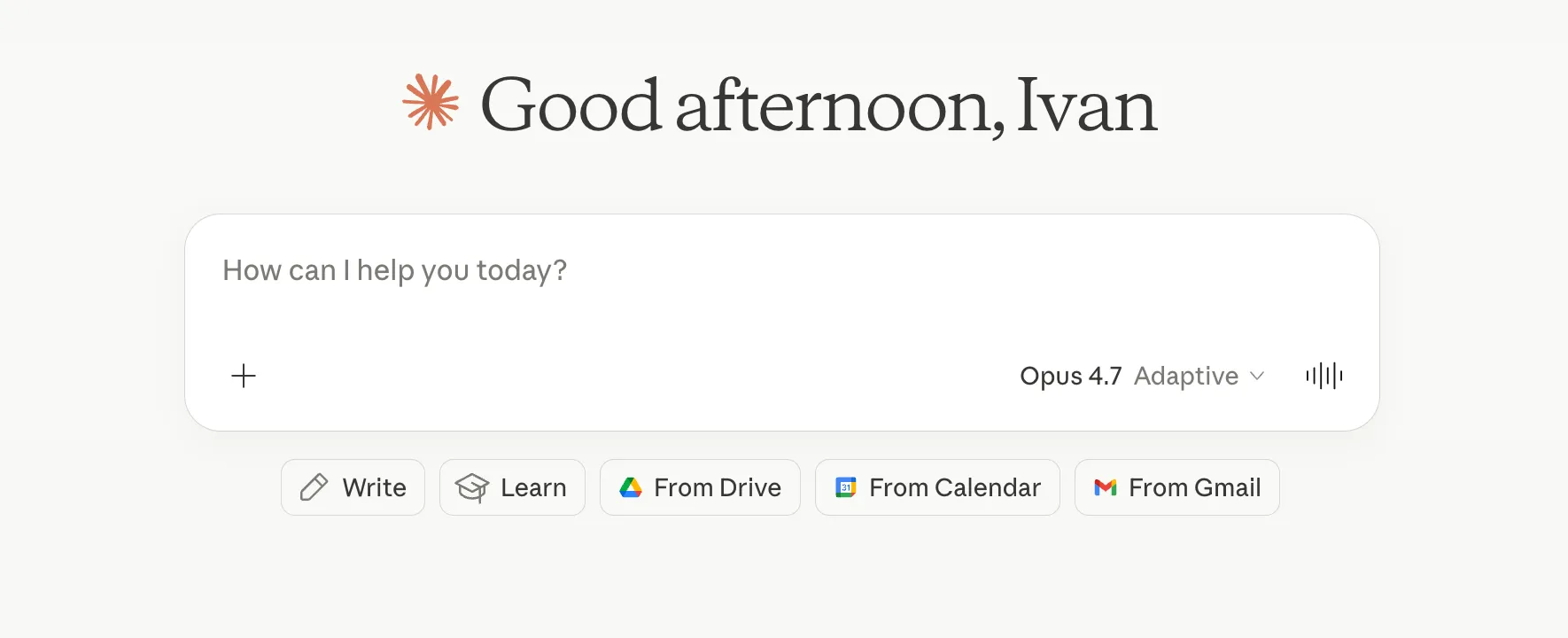

For a growing number of consultants, the answer is: into their personal $20/month Claude or ChatGPT subscription, or the $200/month plan if they pay for the most powerful tier. They paste your pre-IPO mechanism into the chat, ask the model for analysis, and copy the output into your deliverable. They don’t think twice about it. As they read it, the privacy policy says the vendor doesn’t train on consumer data. To them, that’s enough.

It’s not enough.

A privacy policy isn’t a contract

A privacy policy is a unilateral commitment by the vendor — Anthropic, OpenAI, anyone else. They can update it whenever they choose, with notice that may or may not be useful in retrospect. There’s no Data Processing Agreement (DPA) — the standard enterprise instrument — between them and your consultant covering your data. If your general counsel asks “what protects this information?” the honest answer is “Anthropic’s privacy policy says they don’t train on it.” That isn’t what enterprise legal teams expect to hear.

A contract is different. The Anthropic API is governed by Commercial Terms of Service that include this explicit line:

Anthropic may not train its models on Customer’s Inputs or Outputs.

That’s a contractual obligation, enforceable, with the usual remedies. Your general counsel can read it. The same distinction exists for OpenAI: consumer ChatGPT Plus runs on consumer terms; the API and the Team/Enterprise tiers run on enterprise terms with explicit no-training commitments and DPAs.

Same model, different legal envelope. A consultant working through the API gets the same Claude models (Sonnet, Opus) that a $20/month personal subscription offers. The capability is identical. What changes is the contract around it. Your data flows through the same Anthropic infrastructure either way, but only one path has a contract that constrains what Anthropic can do with it.

What to ask any consultant you hire

Three questions that distinguish a thoughtful consultant from one who’s improvising:

- “Which AI tools will handle our data?” The bar is a specific answer: Anthropic API under Commercial Terms, ChatGPT Team, Azure OpenAI, local Llama via Ollama. A vague gesture toward “I use Claude” is a red flag.

- “Is the tool governed by a contract that prohibits training on our data?” API tiers and enterprise subscriptions have this. Consumer subscriptions don’t.

- “What’s your setup for the most sensitive analysis?” A consultant working with regulated or pre-IPO industries should be running open-source models locally — Llama via Ollama on hardware they control — for the highest-confidentiality work. Data never leaves the machine.

If a consultant can’t answer all three crisply, you’re effectively trusting their personal habits with your IP positioning, your fundraising narrative, and your mechanism data. That’s a real exposure, even when everything goes fine.
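
For the third question, “data never leaves the machine” is something a consultant can enforce in their own tooling rather than leave to habit. The sketch below is one way to do that, under stated assumptions: it builds a request for a local Ollama server at its default address (`http://localhost:11434`, endpoint `/api/generate`) and refuses any address that is not loopback. The function names and the `llama3` model name are illustrative placeholders, not anything the consultant’s setup must use, and treating the hostname `localhost` as local is itself an assumption about the machine’s configuration.

```python
import ipaddress
import json
from urllib.parse import urlparse
from urllib.request import Request


def is_loopback(base_url: str) -> bool:
    """Return True only if the URL's host is localhost or a loopback IP."""
    host = urlparse(base_url).hostname
    if host is None:
        return False
    if host == "localhost":  # assumption: localhost resolves to this machine
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Any other hostname is treated as remote.
        return False


def build_local_request(prompt: str,
                        model: str = "llama3",  # placeholder model name
                        base_url: str = "http://localhost:11434") -> Request:
    """Build a POST request for a local Ollama server; reject remote hosts."""
    if not is_loopback(base_url):
        raise ValueError(f"refusing to send client data to non-local host: {base_url}")
    payload = json.dumps({"model": model, "prompt": prompt, "stream": False})
    return Request(
        f"{base_url}/api/generate",
        data=payload.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

With a server running, the request would be sent with `urllib.request.urlopen` and the generated text read from the `response` field of the returned JSON. The guard matters more than the call: a misconfigured base URL fails loudly instead of quietly shipping client material to a remote endpoint.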

What you don’t need to insist on

You don’t need to ask for AI prompt transcripts. The standard for any internal tool (word processors, version control, search engines, AI assistants) is that you’re paying for the deliverable and the analysis, not the toolchain. Asking for every prompt is like asking for every Google search. The reasonable ask is “what tools, under what terms,” not “show me every keystroke.”

You also don’t need to insist on using your enterprise AI environment. Most engagements don’t require it. But if your security policy specifies it, your consultant should accommodate cleanly — that’s within engagement scope, not an exception to it.

The professional baseline

The setup any consultant doing serious biotech work should already be running, in three layers:

- Local AI (open-source models like Llama via Ollama, on hardware they control) for the most sensitive analysis. Data never leaves the machine.

- Enterprise-tier API (Anthropic Commercial Terms or equivalent) for general research on public-domain materials — literature triage, market context, draft scaffolding.

- Personal AI subscriptions reserved for their own work — their writing, their coding, their own research. Never for client materials.
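
The three layers above amount to a routing rule, and writing it down makes the discipline explicit. Here is a minimal sketch, assuming a three-way classification of material that mirrors the list; the category names, tier names, and the rule that a stricter tier is always acceptable are illustrative assumptions, not a standard.

```python
from enum import Enum


class Sensitivity(Enum):
    CLIENT_CONFIDENTIAL = "client_confidential"  # unfiled IP, mechanism data, internal decks
    CLIENT_PUBLIC = "client_public"              # public literature, market context
    PERSONAL = "personal"                        # the consultant's own writing or code


class Tier(Enum):
    # Lower value = stricter handling.
    LOCAL = 0                  # open-source model on hardware the consultant controls
    CONTRACT_API = 1           # API under commercial terms with a no-training clause
    PERSONAL_SUBSCRIPTION = 2  # consumer subscription under consumer terms


# Least strict tier each kind of material may touch.
ROUTING = {
    Sensitivity.CLIENT_CONFIDENTIAL: Tier.LOCAL,
    Sensitivity.CLIENT_PUBLIC: Tier.CONTRACT_API,
    Sensitivity.PERSONAL: Tier.PERSONAL_SUBSCRIPTION,
}


def route(sensitivity: Sensitivity) -> Tier:
    """Return the default tier for a given kind of material."""
    return ROUTING[sensitivity]


def is_allowed(sensitivity: Sensitivity, tier: Tier) -> bool:
    """A tier is allowed if it is at least as strict as the default."""
    return tier.value <= route(sensitivity).value
```

Under this rule, `is_allowed(Sensitivity.CLIENT_PUBLIC, Tier.PERSONAL_SUBSCRIPTION)` is false: no client material reaches a personal subscription, however harmless it looks.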

This is straightforward to set up. The reason it matters is not the technical complexity — it is the discipline. A consultant who hasn’t done this isn’t cost-constrained or technically blocked. They simply haven’t thought about it.

Why this matters now

The consulting market is a few years away from this becoming a table-stakes question, the kind every biotech buyer asks before hiring. For now, most consultants likely haven’t thought it through carefully, and most clients don’t ask. That gap is where mistakes happen, and the mistakes affect you, not the consultant.

The privacy policy says they don’t train on your data. The contract says they’re not allowed to. If you’re hiring someone to handle your most sensitive scientific or strategic work, ask which one your consultant is operating under.